Open Access to the Universe:the Largest and Most Sophisticated Cosmological Simulation is Now in Open Access Domain

A small team of astrophysicists and computer scientists have created some of the highest-resolution snapshots yet of a cyber version of our own cosmos. The data which is a result of one of the largest and most sophisticated cosmological simulations is now open to public. People can observe it and researchers can use it to develop their theories and conduct varies kind of astrological and cosmological researches.

………………………………………………………………………………..

A small team of astrophysicists and computer scientists have created some of the highest-resolution snapshots yet of a cyber version of our own cosmos. Called the Dark Sky Simulations, they’re among a handful of recent simulations that use more than 1 trillion virtual particles as stand-ins for all the dark matter that scientists think our universe contains.

They’re also the first trillion-particle simulations to be made publicly available, not only to other astrophysicists and cosmologists to use for their own research, but to everyone. The Dark Sky Simulations can now be accessed through a visualization program in coLaboratory, a newly announced tool created by Google and Project Jupyter that allows multiple people to analyze data at the same time.

To make such a giant simulation, the collaboration needed time on a supercomputer. Despite fierce competition, the group won 80 million computing hours on Oak Ridge National Laboratory’s Titan through the Department of Energy’s 2014 INCITE program.

In mid-April, the group turned Titan loose. For more than 33 hours, they used two-thirds of one of the world’s largest and fastest supercomputers to direct a trillion virtual particles to follow the laws of gravity as translated to computer code, set in a universe that expanded the way cosmologists believe ours has for the past 13.7 billion years.

“This simulation ran continuously for almost two days, and then it was done,” says Michael Warren, a scientist in the Theoretical Astrophysics Group at Los Alamos National Laboratory. Warren has been working on the code underlying the simulations for two decades. “I haven’t worked that hard since I was a grad student.”

Back in his grad school days, Warren says, simulations with millions of particles were considered cutting-edge. But as computing power has increased, particle counts did too. “They were doubling every 18 months. We essentially kept pace with Moore’s Law.”

When planning such a simulation, scientists make two primary choices: the volume of space to simulate and the number of particles to use. The more particles added to a given volume, the smaller the objects that can be simulated-but the more processing power needed to do it.

Current galaxy surveys such as the Dark Energy Survey are mapping out large volumes of space but also discovering small objects. The under-construction Large Synoptic Survey Telescope “ill map half the sky and can detect a galaxy like our own up to 7 billion years in the past,” says Risa Wechsler, Skillman’s colleague at KIPAC who also worked on the simulation. “We wanted to create a simulation that a survey like LSST would be able to compare their observations against.”

The time the group was awarded on Titan made it possible for them to run something of a Goldilocks simulation, says Sam Skillman, a postdoctoral researcher with the Kavli Institute for Particle Astrophysics and Cosmology, a joint institute of Stanford and SLAC National Accelerator Laboratory. “We could model a very large volume of the universe, but still have enough resolution to follow the growth of clusters of galaxies.”

The end result of the mid-April run was 500 trillion bytes of simulation data. Then it was time for the team to fulfill the second half of their proposal: They had to give it away.

They started with 55 trillion bytes: Skillman, Warren and Matt Turk of the National Center for Supercomputing Applications spent the next 10 weeks building a way for researchers to identify just the interesting bits-no pun intended-and use them for further study, all through the Web.

“The main goal was to create a cutting-edge data set that’s easily accessed by observers and theorists,” says Daniel Holz from the University of Chicago. He and Paul Sutter of the Paris Institute of Astrophysics, helped to ensure the simulation was based on the latest astrophysical data. “We wanted to make sure anyone can access this data-data from one of the largest and most sophisticated cosmological simulations ever run-via their laptop.”

Source Symmetrymagazine

he government of the New South Wales (NSW) has put a single portal data set repository to access government data. According to the statement fromthe finance minster Dominic Perrottet, the data.nsw.gov.au portal offers a new way for people to access data.

The new site, powered by the Open Knowledge Foundation’s open source software, has 353 data sets listed. The data set portal also incorporates various agencies case studies and examples.

The site has attracted record number of requests since it has been launched. For instance search in the NSW State Records jumped from 7129 in May 2013 to 17,229 in the same period in 2014.

The data set is in a format which can be easily accessible and searchable. Moreover, the state government formulated a strategy aims to promote public and companies to use publicly available geospatial data sets while further enriching them by adding geospatial data on the state’s social and economic data sets. The state government made the data set available and accessible under the NSW Government Information Act of 2009. It encourages agencies to make their data openly accessible in accordance with the Act unless there is a specific and overriding reason not to release the data.

Indian research funding agencies have adopted an open access policy which calls for mandatory open access publishing of publicly funded scholarly output. The two agencies, the Department of Biotechnology (DBT) and the Department of Science and Technology (DST), both under the Ministry of Science, have released open access policy documents. According to the Telegraph, the documents remain in scientific community cycle’s circulation for comment till July 25, 2014. By endorsing open access publishing model DBT and DST joined other two Indian research funding agencies, Indian Council of Agricultural Research (ICAR) and Council of Scientific and Industrial Research (CSIR), which took similar policy decisions in the past. The decision of these national research funding agencies is expected to encourage similar agencies, research institutions and academicians to fully embrace open access movement and policies. Furthermore, the measure will boost scientific research and knowledge dissemination.

Research outputs are typically published on journals which obligate readers and librarians pay costly subscription fees. Nevertheless, making research funded by tax payer’s money freely available and widely accessible removes barriers and opens more knowledge and information windows for scientific and nonscientific communities alike. Open Access model is touted as an alternative to classic scientific journal publishing because the later keeps knowledge behind paywalls.

Open Access movement is reaching research funding agencies and scientific community in every corner of the world. Open access movement is no longer a cause that only few groups and individuals advocate for. The movement has become so successful that many countries and research funding agencies have come on board. The call for making publicly funded research output openly accessible is apparently coming from every angle. The European Union through its Horizon 2020 policy and the US are the major players in this regard. Developing nations are also following suit. Therefore, they have started embracing the movement and formulating open access policies which facilitate smooth implementation and transition.

UNESCO Lunches African Open Access Project

UNESCO launches African Open Access (OA) project which primarily focuses on three sub-regions: East, West and North. The project will benefit scientific organizations, researchers, students of higher institutions, and science technology and innovation systems in various countries. It will be implemented in partnership with various universities and organizations. UNESCO allocated an estimated budget of 2.1 million USD for the project that will be implemented in 2014/16.

The project’s aim is to accomplish the folowing goals: examining an inclusive and participatory modality to implement Open Access to Scientific Information and Research, developing approaches for upstream policy advice, and building partnerships for OA and strengthening capacities at various levels to foster OA. During implementation the project will undertake survey on the possibilities of setting Pan-African OA standard. It will also organize international congresses on OA in Africa and release Open Access toolkit for promotion of OA journals and repositories. Moreover, the project will carry out policy research for evidence-based policy making, and developing a reliable set of indicators for measuring the impact of Open Access.

As a result of this project, UNESCO expects beneficiary countries to adopt OA policies, member states educational institutions use OA curricula for training librarians and young researchers. UNESCO also anticipates key stakeholders in OA will actively participate in OA knowledge-based community and create a regional, mechanism for South-South Collaboration.

According to UNESCO, access to knowledge is causing lapses in providing economic security, literacy, and creating opportunities for innovations in the continent. There is a widely regarded “Knowledge challenge”. Hence, UNESCO facilitates ways through which open contents, processes and technologies benefit people in Africa. The organization, likewise, endeavors to make sure that information and knowledge are inclusive and widely shared in order to benefit everyone in the continent.

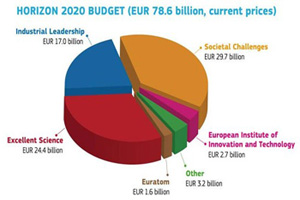

The European Commission (EC) inundated with a record number ofHorizon 2020 Research and Innovation applications. Just in the first round calls, the EC has received 16,000 applications. According to Robert-Jan Smits, Director–General of Research and Innovation speaking at the EuroScience Open Forum (ESOF) 2014, in Copenhagen, the number of applications received has shown growth of about a factor of nine. The Commission initially set a total of €15 billion for this round of calls. It received applications worth nine times what is allocated for, however.

The oversubscription rate of Horizon 2020 first round calls doubled that of the previous research program- the Seventh Framework Program (FP7). The success rate of Horizon 2020 applications is expected to fall to 11 percent. This is due to the surge in proposals. One reason behind driving the number of applications this time so high, Smits suggested, could be the cut in public research budgets in countries like Italy and Spain.

Horizon 2020 was designed in such a way that it brings industry back in the game. There are indications that the Commission has already registered notable success in doing this- 44 percent of the first round proposals came from industry. This is significant jump from where it was under FP7- 29 percent. Moreover, Horizon 2020 policy targets engaging Small and Medium-sized Enterprises (SMEs) companies and channeling research funding SMEs. In this front too, the policy appears to be working- half of all Horizon 2020 industry applications came from this sector.

Horizon 2020 first round calls attracted record number of women applicants. Under this program the percentage of women research applicants account for 23 percent. This shows 3 percent increase from the previous program (FP7). Rise in women participation in horizon 2020 is not only limited to proposal submission. Moreover, the percentage of women evaluating submitted proposals account for 40 percent of the evaluators. This meets the target set by the Commission.

According to Smits, research proposals oversubscription overwhelmingly came from health topics. Other oversubscribed topics are food, ICT and cyber-security.